What Unsloth Offers for Local Model Training and Inference

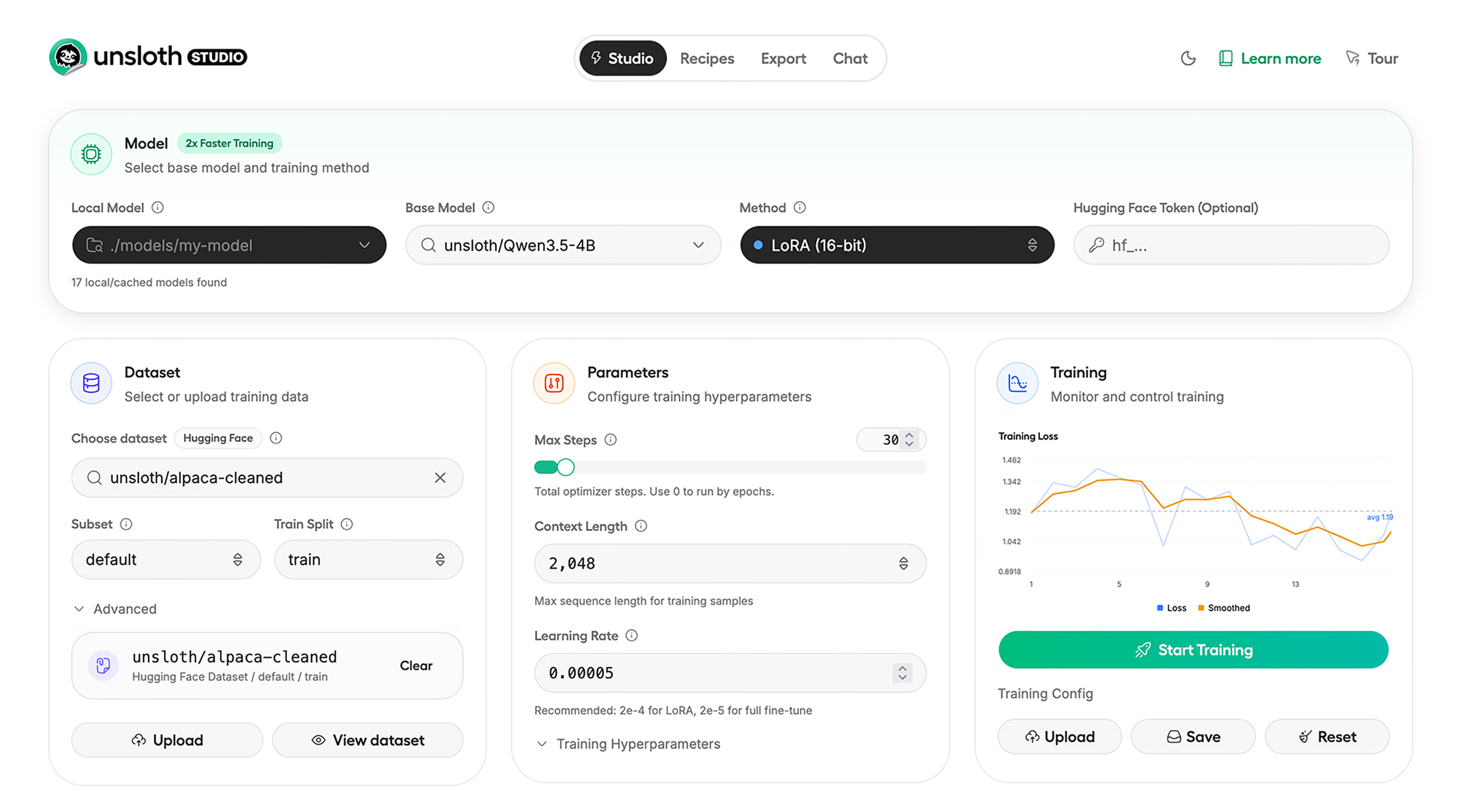

Unsloth combines a local-first interface, model training workflows, dataset preparation, and export tooling so teams can run and fine-tune open models without defaulting to hosted AI platforms.

Unsloth: Local AI Training and Inference Stack

Overview

Unsloth is positioning itself as a practical stack for teams that want to run and fine-tune open models locally instead of building every workflow around hosted APIs. Based on its public website, documentation, and GitHub repository, the project is structured around two complementary layers: Unsloth Studio for a local UI-driven workflow and Unsloth Core for code-first training and inference workflows.

This split serves two audiences: users who prefer a graphical interface can work in Studio, while developers can drive Core from scripts and notebooks.

Product Architecture

The product story is centered on local execution with minimal infrastructure friction. Unsloth Studio enables users to run GGUF and safetensors models locally, compare outputs side by side, upload files into chat workflows, and expose an OpenAI-compatible API from a local environment.
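To make the OpenAI-compatible API point concrete, here is a minimal sketch of how a client might talk to such a local endpoint using only the Python standard library. The base URL, port, and model name are placeholders chosen for illustration, not values documented by Unsloth; the request body follows the general OpenAI chat completions format.

```python
import json
import urllib.request

# Assumption: base URL, port, and model name are illustrative placeholders,
# not values taken from the Unsloth documentation.
BASE_URL = "http://localhost:8000/v1"


def build_chat_request(prompt, model="local-model", base_url=BASE_URL):
    """Build (but do not send) a chat completion request in the OpenAI wire format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def send_chat_request(request, timeout=30):
    """Send the request and return the text of the first returned choice."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Because the request shape is the same as for a hosted API, an existing client can, in principle, be pointed at a local server by changing only the base URL. Check the actual address and model identifier against your local setup.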

On the training side, Unsloth provides Data Recipes to convert raw files into training-ready datasets, along with observability tools and export options for GGUF and safetensors formats.

Performance and Efficiency

A major part of Unsloth’s positioning is efficiency. The project claims up to 2x faster training and up to 70% lower VRAM usage across more than 500 supported models. Reinforcement learning workflows, particularly GRPO-based approaches, are also described as having reduced memory overhead.

These figures should be treated as project claims rather than independently verified benchmarks, but they help explain the platform’s appeal to developers working with limited hardware.
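As a rough illustration of what the "up to" figures would mean if they held in full, the sketch below applies them to a baseline run. The function name and the baseline numbers are hypothetical, and the result is a best-case bound derived from the project's claims, not a measurement.

```python
def best_case_estimate(baseline_vram_gb, baseline_hours,
                       vram_reduction=0.70, speedup=2.0):
    """Apply the claimed upper-bound savings to a baseline training run.

    vram_reduction=0.70 and speedup=2.0 mirror the project's "up to" claims;
    real results depend on the model, hardware, and configuration.
    """
    vram_gb = baseline_vram_gb * (1 - vram_reduction)
    hours = baseline_hours / speedup
    return vram_gb, hours


# Hypothetical baseline: a run that needs 40 GB of VRAM and takes 10 hours.
vram_gb, hours = best_case_estimate(40.0, 10.0)
print(f"Best case: about {vram_gb:.1f} GB VRAM and {hours:.1f} hours")
```

Under these assumptions, a hypothetical 40 GB, 10 hour baseline would drop to roughly 12 GB and 5 hours in the best case, which is the difference between needing a data center GPU and fitting on a single consumer card.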

Platform Support

Unsloth supports a wide range of environments, including Windows, Linux, WSL, macOS, Docker, and Python-based setups. This broad compatibility helps reduce setup friction, which is often a major barrier in local AI workflows.

By consolidating inference, dataset preparation, fine-tuning, export, and monitoring into a single system, Unsloth aims to simplify the entire local AI lifecycle.

Workflow Capabilities

Unsloth is more than just a local chat tool. Its Data Recipes feature transforms inputs like PDFs, CSV files, DOCX files, and JSON into structured training datasets. Studio adds capabilities such as model comparison, tool calling, code execution, and interactive chat workflows.
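The general idea of turning raw files into training-ready data can be sketched with a small CSV-to-JSONL converter. This is not how Data Recipes is implemented; the function name, the column names, and the chat-style output schema are all assumptions made for illustration.

```python
import csv
import io
import json


def csv_to_chat_jsonl(csv_text, prompt_column="question", response_column="answer"):
    """Convert CSV rows into JSONL chat records, one JSON object per line.

    Assumption: column names and the "messages" schema are illustrative only.
    Rows missing either a prompt or a response are skipped.
    """
    reader = csv.DictReader(io.StringIO(csv_text))
    lines = []
    for row in reader:
        prompt = (row.get(prompt_column) or "").strip()
        response = (row.get(response_column) or "").strip()
        if not prompt or not response:
            continue  # incomplete rows would only add noise to training data
        record = {
            "messages": [
                {"role": "user", "content": prompt},
                {"role": "assistant", "content": response},
            ]
        }
        lines.append(json.dumps(record, ensure_ascii=False))
    return "\n".join(lines)
```

A real pipeline would also need to handle PDFs, DOCX files, deduplication, and length limits, which is the kind of work a dedicated dataset preparation tool is meant to absorb.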

Export support for runtimes like Ollama, llama.cpp, and vLLM keeps trained models portable and reduces the risk of vendor lock-in.

Limitations and Considerations

Unsloth Studio is currently in beta, and platform support is not uniform across all training scenarios. The project also has a large number of open issues, which is common for rapidly evolving AI infrastructure but still worth weighing for production use.

Teams should validate compatibility with their specific model requirements, hardware constraints, and deployment targets before committing to the platform.

Conclusion

Unsloth stands out by consolidating a large portion of the local AI lifecycle into a single stack. For solo developers, AI teams, and organizations focused on infrastructure efficiency, this can reduce the complexity of moving from experimentation to production-ready workflows.